
The eventstats command is a dataset processing command.

The eventstats search processor uses a limits.conf file setting named max_mem_usage_mb to limit how much memory the eventstats command can use to keep track of information. When the limit is reached, the eventstats command processor stops adding the requested fields to the search results. Do not set max_mem_usage_mb=0, as this removes the bounds on the amount of memory the eventstats command processor can use.

Splunk Cloud Platform: To change the max_mem_usage_mb setting, request help from Splunk Support. If you have a support contract, file a new case using the Splunk Support Portal at Support and Services. Otherwise, contact Splunk Customer Support.

Splunk Enterprise: To change the max_mem_usage_mb setting, follow these steps.

For an overview about using functions with commands, see Statistical and charting functions. Use the links in the table to see descriptions and examples for each function.

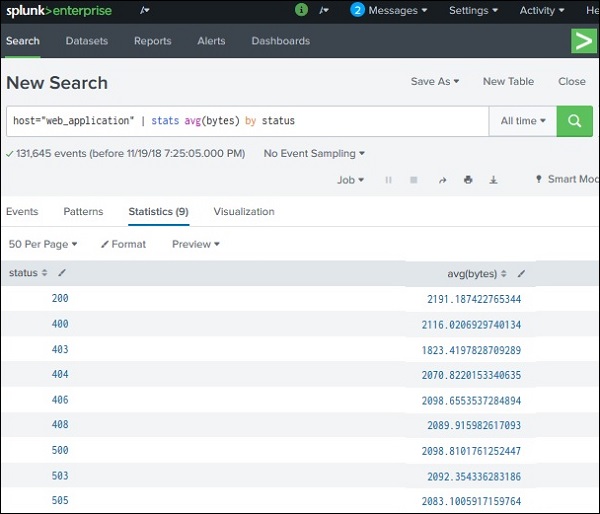

Generates summary statistics from fields in your events and saves those statistics in a new field. Only those events that have fields pertinent to the aggregation are used in generating the summary statistics. The generated summary statistics can be used for calculations in subsequent commands in your search.

Required arguments

stats-agg-term
Syntax: `<stats-func>( <evaled-field> | <wc-field> ) [AS <wc-field>]`
Description: A statistical aggregation function. The function can be applied to an eval expression, or to a field or set of fields. Use the AS clause to place the result into a new field with a name that you specify. You can use wildcard characters in field names.

Optional arguments

allnum
Syntax: `allnum=<bool>`
Description: If set to true, computes numerical statistics on each field if and only if all of the values of that field are numerical. If you have a BY clause, the allnum argument applies to each group independently.
Default: false

by-clause
Syntax: `BY <field-list>`
Description: The name of one or more fields to group by.

Stats function options

stats-func
Syntax: The syntax depends on the function that you use.
Description: Statistical and charting functions that you can use with the eventstats command. Each time you invoke the eventstats command, you can use one or more functions. The following table lists the supported functions by type of function.

Check the results against each other and make sure they came out identical. I wouldn't worry about the number of records scanned if they both got identical results, but I'd make sure the time frames and output results were identical before assuming the code was working apples-to-apples.
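To make the eventstats description above concrete, here is a minimal Python sketch of the idea: compute an aggregate per group, then write it back onto every event instead of collapsing the events. The function name `eventstats_avg` and the sample field names are hypothetical. This is only an analogy for something like `eventstats avg(duration) AS avg_duration BY host`, not how Splunk implements the command.

```python
from collections import defaultdict


def eventstats_avg(events, field, by, out_field):
    """Append the per-group average of `field` to each event.

    Illustrative analogue only; names and behaviour are assumptions,
    not Splunk's implementation.
    """
    # Pass 1: only events that carry the aggregated field contribute
    # to the summary statistics.
    totals = defaultdict(lambda: [0.0, 0])  # group key -> [sum, count]
    for ev in events:
        if field in ev and by in ev:
            acc = totals[ev[by]]
            acc[0] += ev[field]
            acc[1] += 1

    # Pass 2: every event is kept; events whose group has a statistic
    # get the new field added (assumed behaviour for this sketch).
    result = []
    for ev in events:
        enriched = dict(ev)
        key = ev.get(by)
        if key in totals:
            total, count = totals[key]
            enriched[out_field] = total / count
        result.append(enriched)
    return result


events = [
    {"host": "a", "duration": 10},
    {"host": "a", "duration": 20},
    {"host": "b", "duration": 5},
    {"host": "a"},  # no duration: not used in the average
]
enriched = eventstats_avg(events, "duration", "host", "avg_duration")
```

The number of events is unchanged, which is the main difference from a stats-style aggregation that would return one row per group.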

Then do whatever makes sense.

Footnote: Be careful of table. It is a transforming command which has a natural limit on how many results it will allow. (50k?)

Footnote 2 - use at the end of your earliest and latest to make sure the two timelines are exactly the same.
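If you export both searches' results, the "make sure they came out identical" check can be sketched roughly as below. It assumes each result set is a list of dicts with hashable values (for example, rows read with `csv.DictReader`). The helper name `same_results` is made up for illustration.

```python
from collections import Counter


def same_results(rows_a, rows_b):
    """Return True if both result sets hold the same rows, ignoring order.

    Duplicates count: a row that appears twice in one set must appear
    twice in the other.
    """
    def freeze(row):
        # Turn a dict row into a hashable, order-independent key.
        return tuple(sorted(row.items()))

    return Counter(freeze(r) for r in rows_a) == Counter(freeze(r) for r in rows_b)


run_one = [{"host": "a", "count": "3"}, {"host": "b", "count": "1"}]
run_two = [{"host": "b", "count": "1"}, {"host": "a", "count": "3"}]
identical = same_results(run_one, run_two)
```

Comparing as multisets rather than sorted lists avoids having to pick a sort key, and it still catches a row that one search returned more often than the other.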

So, given your results, it looks like the results are in alignment with my expectations - dedup is slightly less efficient, as expected, but only slightly so. They are close enough in overall performance that you can go either way and no one will say "Boo" about it.

Check the details of the run and see how much of that time is on the indexers and how much on the search head. For overall throughput, slightly more CPU time but all of it on the indexers is far better than slightly less CPU time all on the search head.
